Q: Does it matter which color space I select in my camera?

Answer: Yes. No. Maybe.

Confused? Read on.

With all of the attention properly being paid to color management, I’ve been asked whether it matters which color space one uses in the camera and, if so, which one to use. Without going into an in-depth discussion of the concept of “color space”, I’ll try to clear up some of the confusion in this post.

Most modern cameras allow us to choose a “color space”. The most common choices are Adobe RGB 1998 and sRGB; the Adobe space is “larger” but whether it makes a difference, as we will see, depends on how we are going to use our image. Most modern cameras come with a default selection — so whether we choose or not, we have in fact designated a color space. My Nikon D3 comes from the factory set at the narrower sRGB space. As you can see, I’ve reset my camera to Adobe RGB 1998.

Will my selection make a difference? It depends:

Yes.

If I am shooting .jpegs. When we shoot .jpegs, the camera takes the data the sensor captures and “compresses” it. The goal is to create a smaller image file size. In essence, the computer within the camera “edits” the RAW data, deciding what to keep and what to throw out. The color space selection is part of the instruction set the camera uses when making its “keep it” or “lose it” decisions.

If we are shooting .jpegs, only, those decisions are irrevocable. That is why .jpegs are called a “lossy format”.
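To make the “keep it” or “lose it” idea concrete, here is a minimal sketch of what happens when a color that fits in Adobe RGB has to be squeezed into sRGB. It is not how any particular camera works internally; the matrices are the commonly published D65 coefficients for the two spaces, the function names are my own, and the math is done on linear-light values (gamma encoding is left out to keep it short).

```python
# Linear-light Adobe RGB (1998) -> CIE XYZ (D65), commonly published coefficients.
ADOBE_RGB_TO_XYZ = (
    (0.5767309, 0.1855540, 0.1881852),
    (0.2973769, 0.6273491, 0.0752741),
    (0.0270343, 0.0706872, 0.9911085),
)

# CIE XYZ (D65) -> linear-light sRGB, commonly published coefficients.
XYZ_TO_SRGB = (
    (3.2404542, -1.5371385, -0.4985314),
    (-0.9692660, 1.8760108, 0.0415560),
    (0.0556434, -0.2040259, 1.0572252),
)


def mat_vec(matrix, vector):
    """Multiply a 3x3 matrix by a 3-component color."""
    return tuple(sum(row[i] * vector[i] for i in range(3)) for row in matrix)


def adobe_to_srgb_linear(rgb):
    """Express a linear Adobe RGB color in linear sRGB coordinates."""
    return mat_vec(XYZ_TO_SRGB, mat_vec(ADOBE_RGB_TO_XYZ, rgb))


def in_gamut(rgb, tol=1e-4):
    """A color fits the target space only if every channel lies in 0..1."""
    return all(-tol <= c <= 1.0 + tol for c in rgb)


def clip(rgb):
    """The 'lose it' step: out-of-range channels are forced back into 0..1."""
    return tuple(min(1.0, max(0.0, c)) for c in rgb)


for name, color in (("pure green", (0.0, 1.0, 0.0)), ("mid gray", (0.5, 0.5, 0.5))):
    converted = adobe_to_srgb_linear(color)
    print(name, [round(c, 3) for c in converted], "fits sRGB:", in_gamut(converted))
```

The most saturated Adobe RGB green comes out with negative red and blue values in sRGB, which means sRGB cannot represent it; once those channels are clipped and the file is saved, the original color cannot be recovered. A neutral gray, by contrast, survives the trip unchanged.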

I don’t know too many people who shoot .jpegs only. Most of the people I know, who have cameras capable of shooting RAW, shoot either RAW exclusively, or RAW and .jpeg, concurrently. With the decreased cost of storage — both in the camera and on computers — and the increase in speed of camera buffers, there’s not much reason to forgo the many benefits of shooting RAW, but that’s a rant for another time.

Suffice it to say, color space does matter in the in-camera conversion to .jpeg.

No.

If I am shooting RAW, exclusively, the color space selection does not matter. Simply stated, a RAW image represents all of the data to hit the sensor. The camera does not sift, winnow and throw away data. So, no matter which color space is selected, there is no instruction followed that says “bring the image down to the specifications of this space”.

With a RAW image, the color space decision only becomes important when we “output” from our post production software. At that time, we take our RAW image and choose a color space which will best suit our output needs — whether it be for the web, computer screen, inkjet printer, or press. Since the conversion of a RAW image does not affect pixels but, rather, is simply creating an instruction set — we will always have that RAW image to work with and can use it in multiple color spaces (so long as we don’t delete it in favor of the .psd, .jpeg, or .tif we may have created from it.) Most people seem to agree that for monitors and web use, sRGB is the space of choice. However, for regular printing both Adobe RGB and sRGB have proponents — and the decision is often made by which profile is used by a lab rather than which better suits the image. For press, the most common space is CMYK.
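For readers who think in code, the “instruction set” idea can be sketched roughly as follows. Everything here is hypothetical and simplified (the class names, the single exposure setting, the three made-up sensor values); real RAW processors store far more, and this sketch only tags the output with a color space rather than performing a real conversion. The point is only that each output is rendered fresh from the untouched RAW data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RawCapture:
    """Hypothetical stand-in for a RAW file: sensor values that are never modified."""
    sensor_values: tuple


@dataclass(frozen=True)
class Recipe:
    """Hypothetical 'instruction set' kept alongside the RAW data."""
    output_space: str = "sRGB"
    exposure_gain: float = 1.0


def render(raw, recipe):
    """Build a new output image from the RAW data; the RAW data itself is left alone."""
    pixels = tuple(min(1.0, v * recipe.exposure_gain) for v in raw.sensor_values)
    return {"color_space": recipe.output_space, "pixels": pixels}


raw = RawCapture(sensor_values=(0.10, 0.45, 0.80))

# Two different outputs from the same capture, each with its own instructions.
for_web = render(raw, Recipe(output_space="sRGB"))
for_print = render(raw, Recipe(output_space="Adobe RGB (1998)", exposure_gain=1.2))

print(for_web["color_space"], for_print["color_space"], raw.sensor_values)
```

Because the capture is frozen and `render` always returns a new image, we can go back and produce a third or fourth version at any time, so long as the RAW file itself is kept.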

All we need to know is that when shooting RAW, the color space decision in the camera is not critical.

Well, sort of. There are some exceptions.

Maybe.

You didn’t really expect a simple clear cut answer from me, did you? I’m an academic. We don’t see the world in clear cut terms.

There are situations in which the color space choice in the camera WILL MATTER, even when shooting RAW exclusively.

First, and foremost, it will affect the image we see on our LCDs. Why? Because that image is a .jpeg, created by the camera from the RAW data. Even if we are shooting RAW only, the camera has to create a .jpeg to show on the LCD and to be the basis of the image’s histogram. For more on this, see the work of the late Bruce Fraser, a man whose book, Real World Camera Raw, was one of the finest and clearest of all of the photography books I’ve read.

(For those of you who have wondered why, when you shoot RAW with the camera set to Black and White, you get a B&W image on the LCD but a full color image when you go to process the RAW: the B&W image on the LCD is the camera-created .jpeg but, since the image was shot RAW and nothing was thrown out, all of the color data remains for use in post-production.)

And, second, some RAW processors are capable of using the camera’s color space selection as a starting point for adjusting the RAW images. For example, Nikon’s NX2, when opening my RAW .nef images, will start with the color space selected in the camera. (It will also use the “picture control” settings from the camera, things like sharpening, tone compensation, and saturation, as starting points.) The key here is that, although the in-camera choices are being respected, ALL of the RAW data remains, which allows us to process the image in any way we want. Nothing has been or will be thrown away. Once more, all we are creating is an “instruction set”; we are not changing pixels.

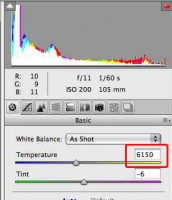

I don’t know about Canon’s software, or the other independent RAW processors, but I do know that neither Adobe Camera Raw nor Lightroom can read .nef files in the same way that Nikon’s own software can; neither strictly “honors” the camera’s color space setting in the same way that NX2 does. Is this a big deal? No, not really. First, Adobe has created camera profiles for use in both programs that come very close to replicating Nikon’s starting points. And, second, I don’t find the discrepancy in color space to make a difference in the ultimate RAW processing decisions I make.

So, does it matter which color space you choose? Maybe. Which one do I choose? The larger Adobe RGB space.

But Wait, There’s More: Warning — Geek Alert

To make sure I understood these concepts before writing about them, I went to the Guru of Color Space, Eddie Tapp. Our email chain is attached here for anyone who wants to see how Geeky we both can be. Thanks, Eddie, for the help and for letting me publish the emails.

(Copyright: PrairieFire Productions/Stephen J. Herzberg — 2009)

12 Responses to “Q: Does it matter which color space I select in my camera?”

Steve: Excellent and thorough treatment of the subject, as always.

I read the email chain with Mr. Tapp and, I probably shouldn’t say this, but I understood pretty much all of it.

Geeky is good!!

Thanks, Dave!

There are some things that seem so simple and simple things that seem so hard. Color space should be in the latter category.

I’m working on a post on “contrast” — something that has seemed simple to me but actually has more depth. I should have it up this week.

Best,

Steve

I do think that there is an important part of the discussion that has not been covered: the suitability of different color spaces for different types of photos. Both the sRGB and Adobe RGB color spaces have the same number of unique colors they can represent (256 levels per channel in 8 bit and 65,536 in 16 bit, or roughly 16.7 million and 281 trillion combinations across the three channels). Adobe RGB maps these unique colors across a wider gamut, but I have always felt that for portrait work, the wider mapping comes at the cost of finer gradations in skin tones. For my work, which is almost exclusively candid portraits and photojournalism, I have stuck with sRGB the past several years and been quite happy.
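The arithmetic behind these counts is easy to check. As a quick sketch (the function names are mine): each channel has 2 to the power of the bit depth levels, and the number of distinct red/green/blue combinations is that figure cubed. Adding the three channels together instead of multiplying gives 768 and 196,608, which count levels rather than colors.

```python
def levels_per_channel(bits):
    """Number of distinct values one channel can hold at a given bit depth."""
    return 2 ** bits


def total_colors(bits, channels=3):
    """Number of distinct combinations across all channels."""
    return levels_per_channel(bits) ** channels


for bits in (8, 16):
    print(
        f"{bits}-bit: {levels_per_channel(bits):,} levels per channel, "
        f"{total_colors(bits):,} possible combinations"
    )
```

Either way, the underlying point stands: the count is the same for sRGB and Adobe RGB at a given bit depth, so a wider gamut spreads the same number of code values over a larger range of colors.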

Well done, Steve and well stated,

You listed 98 percent. Here are my two cents. ok ok, my 2 percents..

My statement is a simple one. Always begin with the end in mind. Portrait or wedding labs almost exclusively use sRGB. Ink jet in your home studios use Adobe RGB. So, why don’t we simply match our CAMERA setting with our PHOTOSHOP setting (in preferences) and match them both to the final output device or printer.

Seems like if you match up all three eliminating any interpolation (expansion of information) or compressing (contraction of information) by any of the three, you’ll get a great print. I have beautiful 30 x 40 wall prints made in sRGB…Same in RGB. Let’s try to keep it simple..

Keep up the great work…Love reading this stuff here..

Tony Corbell

San Diego..

Thanks Tony and Mark for the very helpful contributions.

The ability to get this interaction is why I switched from a newsletter to web-based content.

Color space matters. If you are shooting JPEG+RAW and are a fine artist, set your camera for Adobe RGB 16 bit. Set your raw processing software to ProPhoto RGB. If you are a wedding, portrait, or social photographer and use a Fuji Frontier, Durst Lambda, or the like, or have your proofs done at the local Costco or Walmart, sRGB is the way for you.

This is a workflow solution. For example, Fuji Frontier, Durst, etc. only work in sRGB, so a bigger color space doesn’t matter. But should you need to work “heroically” on an image, you have the RAW file, whose color space you can change. As far as I am concerned, there is only one color space to work in for editing, and that is 16-bit ProPhoto. If I have to go to 8 bit, I convert to Adobe RGB first and then convert from 16 bit to 8 bit.
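One reason commonly given for doing the heavy lifting in 16 bit and dropping to 8 bit only at the end can be shown with a small sketch. The assumptions are mine: a simple darkening curve stands in for any edit or color space conversion, and the function names are made up. The code counts how many distinct 8-bit output levels survive when the same edit starts from 8-bit data versus 16-bit data.

```python
def distinct_levels_after_edit(input_bits, curve, output_bits=8):
    """Apply `curve` to every possible input level, round to the output depth,
    and count how many different output levels remain."""
    in_max = 2 ** input_bits - 1
    out_max = 2 ** output_bits - 1
    return len({round(out_max * curve(v / in_max)) for v in range(in_max + 1)})


def darken(x):
    """Hypothetical stand-in for any tone edit or color space transform."""
    return x ** 2.0


from_8_bit = distinct_levels_after_edit(8, darken)
from_16_bit = distinct_levels_after_edit(16, darken)

print("levels left when editing 8-bit data: ", from_8_bit)
print("levels left when editing 16-bit data:", from_16_bit)
```

Starting from 8 bit, some neighboring levels collapse into one another and others are skipped, which is where banding comes from. Starting from 16 bit, there is enough headroom that every 8-bit output level is still reachable after the edit.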

Greetings all,

I myself shoot RAW – and use Lightroom and love the ability to fine tune RAW images.

My main question is for my photo students…

and their JPEGs: does setting the JPEG color space to Adobe 1998 in camera actually give any more quality to the file? Or is the color space simply mapped better for home inkjet printing using a 6-ink or better printer with real photo-grade paper?

If they were to convert their sRGB JPEGs to Adobe 1998 for printing on “better” inkjet printers with high quality photo paper, would the results be better this way, and no different than if they had set Adobe 1998 in camera to begin with?

BTW, Lightroom does a great job fixing problems with “legacy” JPEGs, especially when the JPEG exposure is right on, and can also allow subtle color changes without fear of losing color data to keep that “rich color look”.

Richard,

If the camera is allowed to process a JPEG file, then choosing the color space in the camera menu is very important. There is no quality advantage to changing color space after the fact, and Adobe RGB 1998 would be a larger starting gamut to print from with inkjet material. It’s best to have the camera menu set to the known space you want to end in, if possible, to prevent unnecessary color shifts. When shooting and processing RAW, color space is chosen last, in the export step of the digital workflow.

Jim DiVitale

Dear Jim, Thanks for this response.

JPEGs shot in the Adobe 1998 color space: do these actually have a wider gamut of color than sRGB, and does this mean the files would be a little larger? I was once told by an expert (I forget who) that an in-camera processed JPEG is native sRGB anyway and, since it could not contain more data, there was little use in setting Adobe 1998. Hey, I’m not doubting you; I’m simply a bit confused. I’m a RAW shooter, but this sort of thing could scare my students who shoot JPEGs…

Richard,

Jim is busy moving and asked that I respond to you. (I’ve run the answer past him to make sure it is right.)

As I understand your question, file size is a primary concern of your students; I assume that they are trying to conserve storage space both in their cameras and on their computers.

If that is the issue, Jim confirms that the choice between sRGB and Adobe RGB will not influence the size of the .jpeg and, therefore, will not make a significant impact on space conservation. Said another way, an sRGB .jpeg will not differ, meaningfully, in storage size from one shot in Adobe RGB.

Steve, I was wondering about this issue in Lightroom. I have it, but have not installed it at this time. All my photos at this time are in Adobe RGB.

As an avid photographer, I’m happy to have found your website. It’s always nice to see others’ work and get new ideas; it’s refreshing and gets the mind working. Everyone’s style is so unique. Check out my site if you’d like at http://www.ducktrapphoto.com